How ChatGPT, Google AI Overviews, and Perplexity Source Information in 2026

Why AI Search Citations Are the New Position Zero

For a decade, position zero meant a featured snippet at the top of a Google results page. In 2026, it means something harder to earn and more valuable to hold: a citation inside an AI-generated answer.

Google AI Overviews now appear in roughly 50% of all searches. ChatGPT serves over 200 million weekly active users. Perplexity has become the default research tool for analysts, journalists, and technical buyers. When these platforms answer a question, they cite a handful of sources, and everyone else is invisible.

The traffic stakes are real. Pages cited in AI Overviews earn 35% more organic clicks than non-cited competitors on the same results page. Visitors arriving from Perplexity convert at roughly 11 times the rate of traditional organic search traffic. And across all AI platforms combined, AI search visitors generate a disproportionate share of signups relative to their traffic volume.

This is why AI search citation strategy has moved from an experimental initiative to a business-critical priority. But there's a critical mistake most brands make: they treat these three platforms as a single channel and optimize accordingly. The data says otherwise.

The Three Platforms Don't Work the Same Way — Here's the Data

An analysis of 680 million citations across ChatGPT, Google AI Overviews, and Perplexity reveals something that should change how you think about generative engine optimization (GEO): only 11% of domains are cited by both ChatGPT and Perplexity. Each platform operates on fundamentally different citation logic.

A separate 2026 study of 34,234 AI responses found up to a 46-times difference in brand citation rates between platforms. ChatGPT cited brands just 0.59% of the time, while Perplexity sat at 13.05% (about 22 times ChatGPT's rate) and Grok came in even higher at 27% (about 46 times).
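The ratios implied by those three rates can be checked with a few lines of arithmetic. This is only a sketch using the percentages quoted above; the `rates` dictionary and `rate_ratio` helper are illustrative names, not part of the study. It shows that the 46-times gap corresponds to Grok versus ChatGPT, while Perplexity versus ChatGPT is closer to 22 times.

```python
# Brand citation rates quoted above, as a percentage of AI responses.
rates = {"ChatGPT": 0.59, "Perplexity": 13.05, "Grok": 27.0}


def rate_ratio(higher: str, lower: str) -> float:
    """Return how many times larger one platform's rate is than another's."""
    return rates[higher] / rates[lower]


perplexity_vs_chatgpt = rate_ratio("Perplexity", "ChatGPT")
grok_vs_chatgpt = rate_ratio("Grok", "ChatGPT")

print(f"Perplexity vs ChatGPT: {perplexity_vs_chatgpt:.1f}x")  # 22.1x
print(f"Grok vs ChatGPT: {grok_vs_chatgpt:.1f}x")              # 45.8x
```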

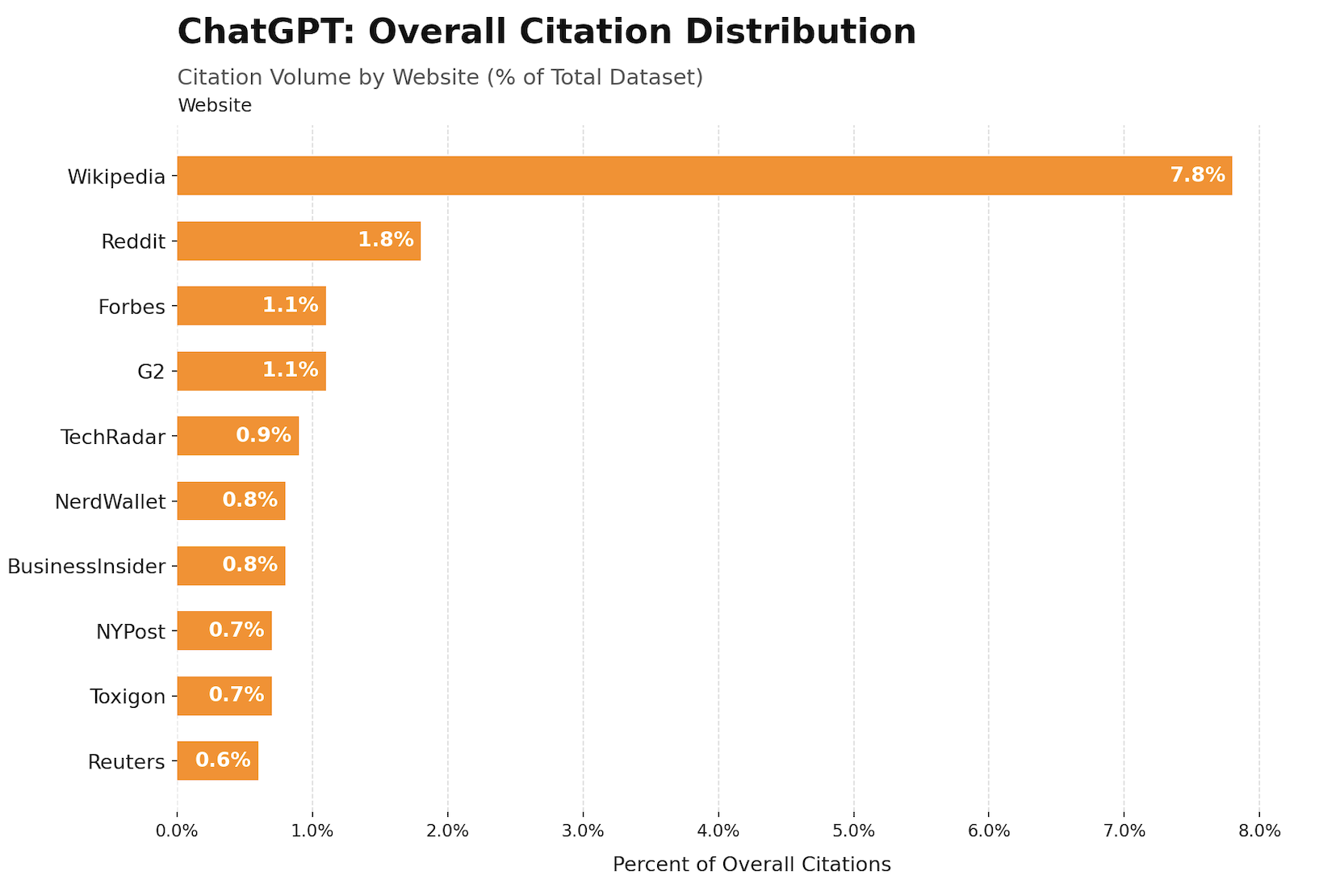

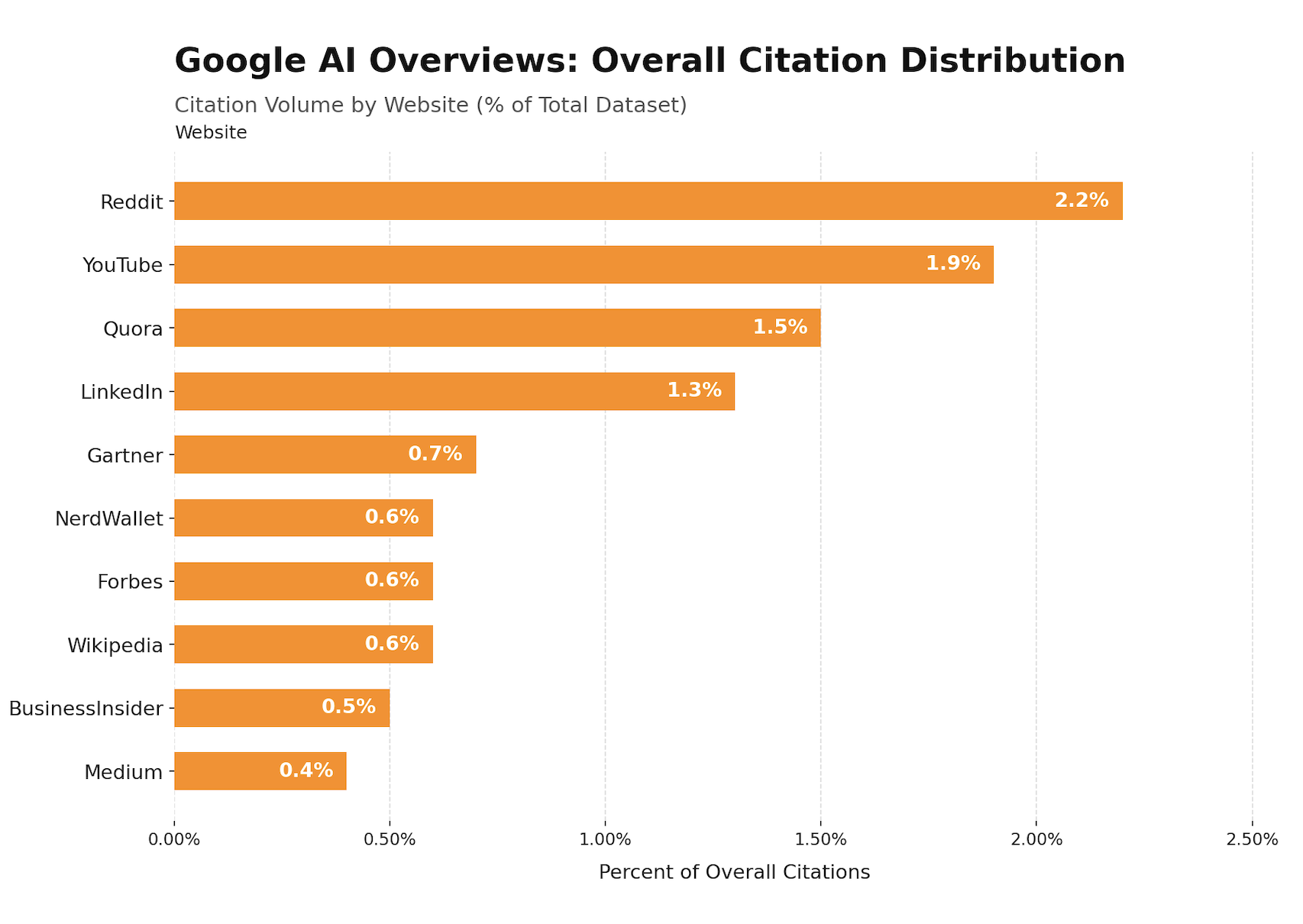

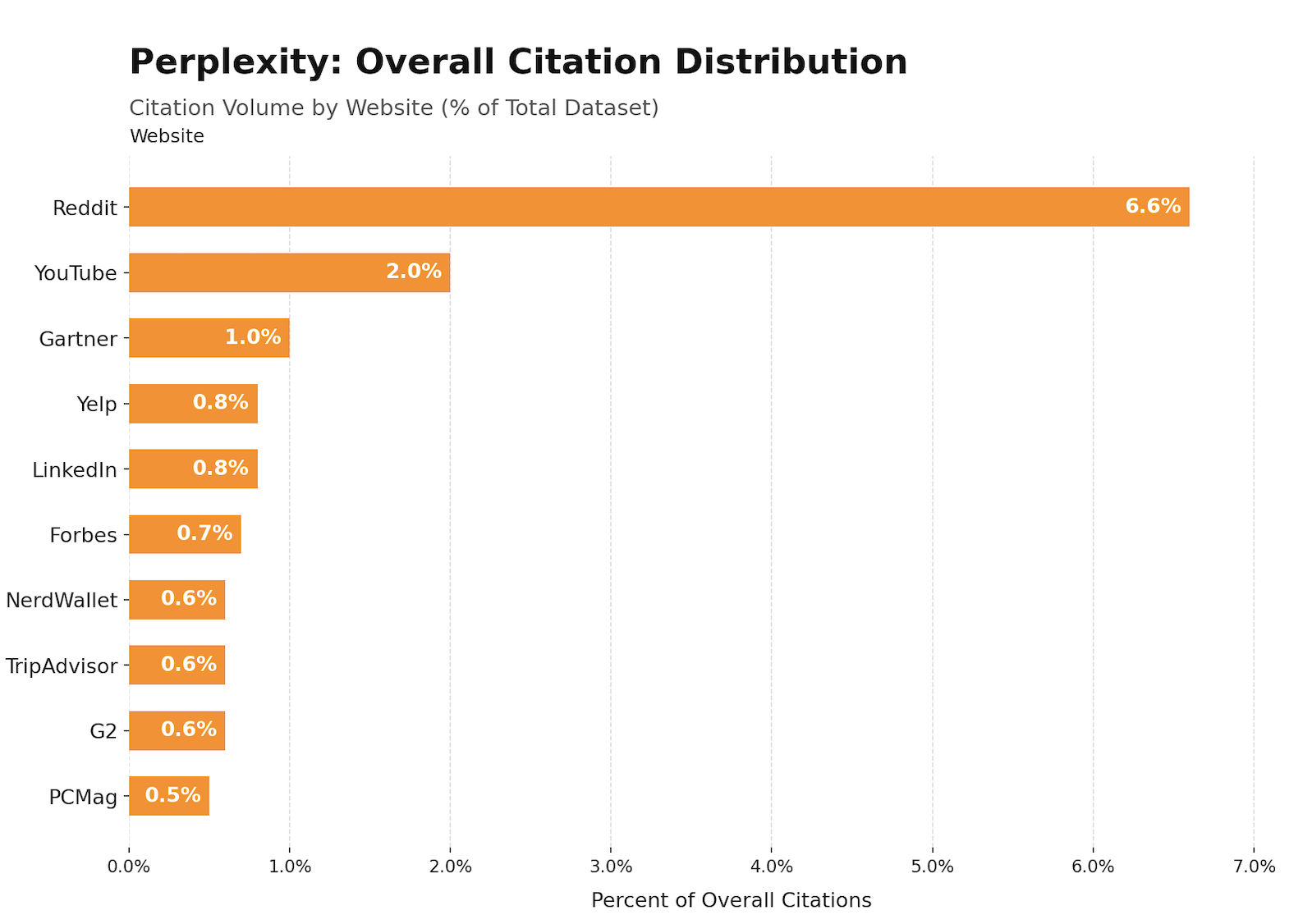

The table below shows the leading citation source for each platform and what it signals about content strategy:

| Platform | #1 Cited Source | Core Sourcing Philosophy |
|---|---|---|
| ChatGPT | Wikipedia (7.8% of all citations) | Encyclopedic authority, training-data depth |
| Google AI Overviews | Reddit (2.2%) | Balanced social + professional mix |
| Perplexity | Reddit (6.6%) | Real-time community + recency |

Optimizing for "AI search" as a single category is like running the same campaign on LinkedIn and TikTok. The underlying mechanics are different — and so is the winning strategy for each. Here's how each one actually works.

How ChatGPT Sources and Cites Information

ChatGPT Search operates on a two-layer system. The base layer is its training data — vast, static, and built from web content crawled before the model's cutoff. The retrieval layer is Bing-powered, activated primarily for commercial-intent queries: searches that include terms like "reviews," "comparison," "features," or a year like "2026."

One study found that commercial-intent prompts trigger web search in ChatGPT 53.5% of the time, compared to just 18.7% for informational queries. This matters for strategy: if you want to be cited when someone asks "what's the best LinkedIn content tool," you need Bing visibility, not just brand mentions in training data.
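To sort your own keyword list by this distinction, a rough heuristic may be enough to start. The sketch below is not how ChatGPT decides when to search; it simply flags prompts containing the trigger terms mentioned above ("reviews," "comparison," "features," or a year). The extra terms "best," "compare," and "vs" are assumptions added for illustration, as are the function and constant names.

```python
import re

# "review", "comparison", "features" come from the article's examples;
# "best", "compare", and "vs" are illustrative additions (assumptions).
COMMERCIAL_TERMS = ("review", "comparison", "features", "best", "compare", "vs")
YEAR_PATTERN = re.compile(r"\b20\d{2}\b")


def looks_commercial(prompt: str) -> bool:
    """Flag a prompt as likely commercial-intent using simple term matching."""
    text = prompt.lower()
    words = set(re.findall(r"[a-z0-9]+", text))
    # Match each term in its listed form or with a trailing "s".
    has_term = any(term in words or term + "s" in words for term in COMMERCIAL_TERMS)
    return has_term or bool(YEAR_PATTERN.search(text))


for prompt in ["what's the best LinkedIn content tool",
               "CRM reviews 2026",
               "how does photosynthesis work"]:
    print(prompt, "->", looks_commercial(prompt))
```

Prompts flagged as commercial are the ones where Bing visibility is most likely to matter; the rest depend more on brand presence in training data.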

ChatGPT's citation process is highly selective. Research shows it retrieves multiple candidate pages per query but cites only 15% of the pages it actually retrieves. Selection favors content the model can parse cleanly: structured H1/H2/H3 headings, direct-answer formatting, FAQ schema, and cited claims within the text itself. Pages with FAQ schema and inline citations receive approximately 40% higher citation weighting in ChatGPT source selection than pages without these elements.

On the domain authority side, ChatGPT relies heavily on third-party signals. Domains with active profiles on platforms like G2 or Capterra show 3 times higher citation probability than sites without such presence. Brand mentions across YouTube and broader web properties are among the strongest correlating factors for ChatGPT AI brand visibility — meaning off-page signals are not optional.

The platform also skews older with its citation history. Approximately 29% of ChatGPT's citations reference content published in 2022 or earlier, reflecting the weight of accumulated training data. This makes brand authority in third-party sources — not just freshly published blog posts — the primary lever for ChatGPT visibility.

What ChatGPT's Citation Bias Toward Encyclopedic Content Means for You

ChatGPT's Wikipedia dominance (47.9% of top citations among its leading sources) signals what the model trusts: factual, comprehensive, neutrally framed content that covers a topic thoroughly without being overtly promotional.

For B2B brands, this translates to a specific content type: definitive topic guides that establish your brand as the authoritative source in a category. Content that defines a concept, organizes it with clear headings, and answers real questions in the opening paragraphs gives ChatGPT the "extractable" format it prefers. AI systems don't favor pages that require a reader to scroll for the answer — they favor pages that front-load it. Research confirms that 44.2% of all LLM citations come from the first 30% of page content.

How Google AI Overviews Select Their Sources

Google AI Overviews are powered by Gemini and pull from Google's existing organic search index — but the relationship between organic ranking and AI citation has fundamentally changed.

In mid-2025, roughly 76% of pages cited in AI Overviews also ranked in the top 10 organic results for the same query. By early 2026, separate Ahrefs research placed that figure at approximately 38%, while BrightEdge data put it even lower at around 17%. The direction is unmistakable: traditional organic ranking is becoming a weaker predictor of AI Overview citation, not a stronger one.

An analysis of nearly 16,000 AI Overview results across 63 industries identified seven factors that consistently determine citation selection; four of them are detailed here. Semantic completeness, meaning content provides a complete, self-contained answer without requiring external context, is among the strongest predictors, with a correlation of r=0.87. Multi-modal content integration (text combined with images or video) shows 156% higher selection rates than text-only content. Real-time factual verification, where content links to verifiable data and cross-referenced sources, correlates at r=0.89. Structured data markup contributes a 73% selection rate improvement over unstructured pages.

One structural signal deserves particular attention: LinkedIn itself appears in Google AI Overviews' top 10 cited sources at a 13% share of the platform's leading citations. For B2B brands, this means that well-structured LinkedIn content — company pages, long-form articles, and profile authority — has a direct path into Google AI Overview citations, not just LinkedIn's internal algorithm.

Why Ranking #1 No Longer Guarantees an AI Overview Citation

The decoupling of organic rankings from AI Overview citations has a specific cause: Google's AI isn't just looking at the top-ranked page for a query. It's looking for the page that best answers the question, regardless of position.

Ahrefs data shows that 47% of AI Overview citations in 2025 came from pages ranking below position 5 in organic search. An analysis of 432,000 keywords by seoClarity found that 97% of AI Overviews cite at least one source from the top 20 organic results: not the top 10, the top 20. YouTube has also emerged as one of the most-cited sources in AI Overviews. Ahrefs research on 75,000 brands found that brand mentions in YouTube video titles and transcripts are the single strongest correlating factor with AI Overview visibility among all signals studied.

The practical implication: ranking well still helps, but it's no longer sufficient. Content structure, E-E-A-T signals, and multi-channel brand presence now matter more than position alone.

How Perplexity Sources Information in Real Time

Perplexity is architecturally different from the other two platforms — and that difference changes everything about how to earn citations there.

Unlike ChatGPT's hybrid of training data and selective web retrieval, Perplexity performs a real-time web search for every single query. It draws from multiple search APIs including Google and Bing, retrieves and reads candidate pages, and synthesizes a detailed answer with inline numbered citations. There is no knowledge cutoff. New content can be cited by Perplexity within hours of being indexed.

This real-time architecture explains Perplexity's behavior with fresh content. The platform cited content published within the last 30 days at an 82% rate in one 2026 analysis. A blog post from six months ago consistently loses to a fresh piece on the same topic. Visible year signals — including "2026" in titles and headings — improve citation rates by approximately 30%.
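A content inventory can be bucketed by these freshness windows with a short script. The sketch below uses the 30-day window from this section plus the six- and 12-month windows the article cites for AI search generally. The tier labels, the 182-day approximation of six months, and the function names are assumptions for illustration.

```python
from datetime import date

# Windows based on the article's figures: 30 days, about 6 months, 12 months.
FRESHNESS_WINDOWS = [
    (30, "within 30 days"),
    (182, "within 6 months"),
    (365, "within 12 months"),
]


def freshness_tier(last_updated: date, today: date) -> str:
    """Return the tightest freshness window a page's last update falls into."""
    age_days = (today - last_updated).days
    for max_days, label in FRESHNESS_WINDOWS:
        if age_days <= max_days:
            return label
    return "older than 12 months"


def has_year_signal(title: str, year: int) -> bool:
    """Check whether a title or heading visibly includes the given year."""
    return str(year) in title


today = date(2026, 1, 15)
print(freshness_tier(date(2026, 1, 1), today))   # within 30 days
print(freshness_tier(date(2025, 9, 1), today))   # within 6 months
print(has_year_signal("AI Search Guide 2026", 2026))  # True
```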

Perplexity also averages 21.87 citations per response, the highest of any major AI platform. That high citation count is a structural opportunity: Perplexity cites nearly three times as many sources per response as ChatGPT, meaning the competition for each individual citation slot is lower.

The platform's content preferences are specific. Research shows Perplexity favors pages with structured H2/H3 headings organized around specific questions, visible statistics and proprietary data, named sources with verifiable methodology, and content that cites other authoritative sources (building what researchers describe as "a web of mutual verification"). Reddit accounts for 46.7% of Perplexity's top citation sources — not because Reddit is inherently authoritative, but because it represents exactly what Perplexity is tuned to surface: real people answering real questions in communities built around a topic.
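One of these preferences, question-oriented H2/H3 headings, is easy to audit on your own pages. The following is a minimal sketch using Python's standard `html.parser`. The list of question words and the "ends with a question mark or starts with a question word" rule are assumptions chosen for illustration, not a documented Perplexity criterion.

```python
from html.parser import HTMLParser

# Illustrative list of words that typically open a question (an assumption).
QUESTION_WORDS = ("how", "what", "why", "when", "which", "who",
                  "does", "do", "can", "is", "are", "should")


class HeadingCollector(HTMLParser):
    """Collect the text of every <h2> and <h3> element in a page."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._current = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            self.headings.append((tag, "".join(self._buffer).strip()))
            self._current = None


def is_question(heading: str) -> bool:
    """Treat a heading as a question if it ends with '?' or opens with a question word."""
    words = heading.lower().split()
    return heading.endswith("?") or (bool(words) and words[0] in QUESTION_WORDS)


def question_heading_share(html: str) -> float:
    """Return the fraction of H2/H3 headings phrased as questions."""
    parser = HeadingCollector()
    parser.feed(html)
    if not parser.headings:
        return 0.0
    hits = sum(is_question(text) for _, text in parser.headings)
    return hits / len(parser.headings)


sample = "<h1>Guide</h1><h2>How does Perplexity cite sources?</h2><p>x</p><h3>Pricing</h3>"
print(question_heading_share(sample))  # 0.5
```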

For B2B brands, this means earned media has a near-real-time path to Perplexity citations. Product reviews on G2, comparison articles, press mentions — all are indexed live and surfaceable within hours.

Why Perplexity's Citations Drive the Highest-Converting Traffic

Perplexity's user base skews toward journalists, analysts, investors, and technical professionals — people who are actively researching before making decisions. Every citation in Perplexity is an inline linked reference, meaning a citation equals a direct clickable referral to your website.

The conversion data reflects this buyer quality. According to aggregated LLM traffic studies, LLM referral traffic converts at 1.66% for signups compared to 0.15% from traditional organic search, roughly an 11-times improvement (1.66 / 0.15 ≈ 11.1). Despite accounting for only 15-20% of AI referral volume, Perplexity delivers the highest ROI per citation earned of any platform. For B2B brands and founders targeting high-intent buyers, Perplexity visibility is increasingly as important as Google visibility.

The Universal Citation Signals That Work Across All Three Platforms

Despite their differences, ChatGPT, Google AI Overviews, and Perplexity share a core set of signals that improve citation probability across all three. Understanding how AI platforms rank content starts here.

Semantic completeness. AI platforms favor content that provides a complete, self-contained answer without requiring the reader to go elsewhere. If a paragraph extracted from your page wouldn't make sense in isolation, restructure it to stand alone. Front-load your answer in each section — the direct response first, supporting context second.

E-E-A-T signals. Author bylines with verifiable credentials, explicit publication and update dates, external citations from authoritative sources, and contributor expertise markers all improve citation probability across platforms. Pages with strong E-E-A-T indicators show 22% higher visibility in AI-generated results. Content freshness is part of this: 53% of content cited in AI search had been updated within the last six months, and pages updated within the past 12 months are twice as likely to earn citations.

Structured data markup. Schema markup — particularly FAQPage, Article, and BreadcrumbList — is no longer optional for AI search visibility. FAQ schema alone correlates with 40% higher citation weighting in ChatGPT and significantly higher citation rates in Google AI Overviews. Citation algorithms scan schema to verify E-E-A-T before selecting sources.
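For readers who have not written FAQ schema before, here is a minimal sketch of generating a schema.org `FAQPage` JSON-LD block from question-and-answer pairs. The `faq_jsonld` helper and the sample pairs are illustrative. The `@type`, `mainEntity`, and `acceptedAnswer` property names follow the schema.org vocabulary, but validate the output with a structured-data testing tool before shipping.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    ("Does schema markup actually help with AI citations?",
     "Yes. FAQ schema correlates with higher citation weighting."),
    ("How quickly can new content get cited by AI platforms?",
     "It varies by platform."),
])

# Embed the JSON-LD in the page inside a script tag.
script_tag = ('<script type="application/ld+json">'
              + json.dumps(faq, indent=2)
              + "</script>")
print(script_tag)
```

The questions and answers in the markup should match the FAQ text visible on the page.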

Original data. AI platforms actively prioritize original data that can't be found elsewhere. Including original research, proprietary statistics, aggregated client results, or survey data makes content a citation magnet. Adding statistics can increase AI visibility by 22%; using direct quotations can boost it by 37%.

Earned media distribution. Distributing content to a wide range of publications can increase AI citations by up to 325% compared to publishing only on your own site. Ninety percent of AI citations driving brand visibility originate from earned and owned media, not paid placements.

Where Content Position on the Page Actually Matters

Not all sections of a page carry equal citation weight. Research from Zyppy analyzing thousands of ChatGPT citations found a clear positional pattern: the first 30% of a page — the introduction, any TL;DR block, and the opening section — accounts for 44.2% of all LLM citations. The middle 30–70% contributes 31.1%, and the final 30% accounts for 24.7%.

The practical implication: put your most important facts, your direct answer to the query, and your strongest data points in the first third of every page. A TL;DR block, a direct-answer opening, and a key-facts summary near the top of your content maximize citation probability before a reader, or an AI, ever reaches the middle sections.
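To see where a key statement sits on a page, the positional buckets above can be applied to the page's plain text. This is a rough sketch: it measures position by character offset, which is an assumption about how "first 30%" is defined, and the function and constant names are illustrative.

```python
# Citation shares per page region, as reported in the research cited above.
CITATION_SHARE = {
    "first 30%": 44.2,
    "middle (30-70%)": 31.1,
    "final 30%": 24.7,
}


def position_bucket(page_text: str, passage: str) -> str:
    """Return which region of the page a passage first appears in."""
    index = page_text.find(passage)
    if index == -1:
        raise ValueError("passage not found in page text")
    relative = index / max(len(page_text), 1)
    if relative < 0.30:
        return "first 30%"
    if relative < 0.70:
        return "middle (30-70%)"
    return "final 30%"


page = "Answer: X. " + "filler " * 100 + "Late fact."
for passage in ("Answer: X.", "Late fact."):
    bucket = position_bucket(page, passage)
    print(f"{passage!r}: {bucket} ({CITATION_SHARE[bucket]}% of citations)")
```

Running this over a page's main claims gives a quick list of facts that may be worth moving closer to the top.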

Platform-Specific Optimization Playbook for 2026

Based on the citation mechanics above, here is a prioritized action plan for each platform:

For ChatGPT:

- Build Bing search presence through consistent site updates, schema markup, and technical SEO fundamentals — ChatGPT Search retrieves via Bing

- Earn reviews and mentions on third-party platforms (G2, Capterra, industry directories) to establish off-page authority signals

- Structure every page with semantic HTML (H1/H2/H3 hierarchy), FAQ sections, and direct-answer blocks at the top of each major section

- Build topic clusters, not standalone posts — topical authority across a subject area is a stronger signal than any individual page

- Target the queries that trigger ChatGPT web search: comparison, review, and feature-focused prompts with commercial intent

For Google AI Overviews:

- Prioritize semantic completeness over keyword matching — AI Overviews reward pages that answer the full question, not just match the query

- Invest in multi-modal content: pages combining text with images, video, or structured tables show 156% higher AI Overview selection rates

- Ensure technical SEO fundamentals are solid, since organic ranking still correlates with AI citation probability, even if the relationship has weakened

- Create and maintain a YouTube presence — brand mentions in video titles and transcripts are the strongest single correlating factor with AI Overview visibility among all measured signals

- Keep content current: pages not updated quarterly are three times more likely to lose AI Overview citations

For Perplexity:

- Publish fresh content consistently — prioritize regular publication over occasional long-form pieces

- Structure content with question-format H2/H3 headings that mirror how users phrase queries

- Include verifiable statistics, named sources, and methodology — Perplexity rewards content that creates a web of mutual verification

- Build presence on Reddit and review platforms in your niche — earned media has a near-real-time path to Perplexity citations

- Use llms.txt and ensure PerplexityBot is not blocked in your robots.txt or WAF rules
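The robots.txt part of that last item can be verified with Python's standard `urllib.robotparser`. The sketch below parses a robots.txt body and reports whether a given user agent may fetch a URL. The sample rules and the `example.com` URL are made up for illustration, and the check covers robots.txt only: WAF or CDN blocks need to be tested separately.

```python
from urllib.robotparser import RobotFileParser


def bot_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt rules let this user agent fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks PerplexityBot but allows everyone else.
sample_rules = """\
User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""

print(bot_allowed(sample_rules, "PerplexityBot", "https://example.com/guide"))  # False
print(bot_allowed(sample_rules, "Googlebot", "https://example.com/guide"))      # True
```

In practice you would load your live robots.txt content into `bot_allowed` and run it against a few key URLs.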

How Brand Authority and Consistent Content Drive AI Visibility

AI citation platforms don't just evaluate individual pages — they evaluate brand authority as a signal. Research shows brand search volume is one of the strongest predictors of LLM citations, outweighing the impact of traditional backlinks. Brands in the top 25% for web mentions receive 10 times more AI visibility than those in the bottom quartile.

This is where LinkedIn content strategy becomes directly relevant to AI search visibility. LinkedIn appears in Google AI Overviews' top 10 cited sources, and consistent, well-structured content published under a recognizable brand identity contributes to the brand authority signals that all three AI platforms measure. Founders and B2B professionals who maintain a regular LinkedIn presence aren't just building an audience — they're building the kind of distributed brand signal that AI citation algorithms reward.

The connection between consistent publishing and AI citation probability is also structural: content freshness is a major ranking factor across seven tested AI models. Brands that publish regularly give AI platforms a reason to return to their domain. Those that publish sporadically — or let their key pages go stale — fall behind competitors who treat content publishing as a system, not an occasional project.

Tools like Cassy, Leapd's LinkedIn AI coworker, address exactly this problem for B2B creators and founders. Cassy learns your brand voice, researches your ICP and trending topics in your niche, and turns that research into a consistent flow of LinkedIn posts — all through a natural conversational interface. Most tools help you post. Cassy helps you convert: turning LinkedIn activity into booked meetings and pipeline while simultaneously building the brand authority that AI search platforms use to select citation sources.

For teams managing AI search visibility at the platform level, Alex — Leapd's AI search visibility agent — tracks how your brand appears across ChatGPT, Claude, Perplexity, Gemini, and Google AI Overviews, audits your site for AI readiness, and delivers a prioritized action plan to close the gaps.

Frequently Asked Questions About How AI Platforms Source Information

Do ChatGPT, Perplexity, and Google AI Overviews use the same sources?

No — and the overlap is smaller than most people expect. Analysis of 680 million citations found that only 11% of domains are cited by both ChatGPT and Perplexity. Google AI Overviews and Google AI Mode cite the same URLs only 13.7% of the time, despite reaching similar conclusions. Each platform has distinct source preferences, citation mechanics, and content signals. A brand that ranks well in Google AI Overviews may be completely invisible in Perplexity or ChatGPT Search.

Does ranking #1 on Google mean I'll appear in AI Overviews?

Not automatically. In mid-2025, 76% of AI Overview citations came from top-10 organic results. By early 2026, that figure had dropped to 38% in Ahrefs data and as low as 17% in BrightEdge research. Semantic completeness, structured data, E-E-A-T signals, and multi-modal content now influence AI Overview selection independently of ranking position.

Why does Perplexity cite Reddit so heavily?

Perplexity's real-time retrieval architecture surfaces content that matches how users phrase questions — and Reddit threads naturally mirror conversational query language. Reddit accounts for 46.7% of citations among Perplexity's top sources because it consistently provides authentic, experience-based answers in community contexts that align with what Perplexity is optimized to surface. This signals that building a community presence — or earning mentions in existing communities — is a direct path to Perplexity visibility.

Does schema markup actually help with AI citations?

Yes, measurably. FAQ schema correlates with approximately 40% higher citation weighting in ChatGPT. Structured data markup shows a 73% improvement in AI Overview selection rates. Citation algorithms scan schema to verify E-E-A-T signals before choosing sources. Pages with proper FAQ schema, structured author credentials, and explicit expertise markers consistently outperform equivalent pages without schema.

How quickly can new content get cited by AI platforms?

It varies by platform. Perplexity performs real-time web retrieval for every query, meaning new content can appear in Perplexity citations within hours of being indexed by Google or Bing. Google AI Overviews draw from the existing organic index, so citation eligibility follows indexing timelines. ChatGPT's training-data base is static between model updates, but its Bing-powered retrieval layer can surface new content for commercial-intent queries relatively quickly after indexing.

What content type is most likely to earn AI citations in 2026?

Original data and proprietary research are the highest-leverage content type across all three platforms. Case studies and pricing pages outperform top-of-funnel "what is" or "how to" guides for driving AI-referred traffic. Data-backed topic guides with FAQ schema, verifiable statistics, structured headings, and regular updates are the format most consistently cited across ChatGPT, Perplexity, and Google AI Overviews.

Start Getting Cited Before Your Competitors Do

The brands winning AI search visibility in 2026 aren't necessarily the ones with the largest content libraries or the highest organic rankings. They're the ones who understand that ChatGPT, Google AI Overviews, and Perplexity each operate on different citation logic — and who've built platform-specific strategies to match.

The foundation is the same across all three: structured content, verified authority, consistent publishing, and a brand presence that extends beyond your own site. Start by auditing where you stand across every AI platform, identify the gaps your competitors are filling, and build the content and citation infrastructure to close them — before the next model update reshapes the landscape again.

Want to see where you stand right now? Check your AI visibility for free — no signup, no credit card. Just enter your URL and see how ChatGPT, Gemini, Perplexity, and Google AI talk about your brand.